How I used AI pair programming to create a zero-dependency .NET reporting library in a few hours

Meta note: This blog post was written by Claude Sonnet 4.5 under my direction, which seems fitting given that the library it describes was also written by Claude under my direction. It’s Claude all the way down—except for the design decisions, which are all mine.

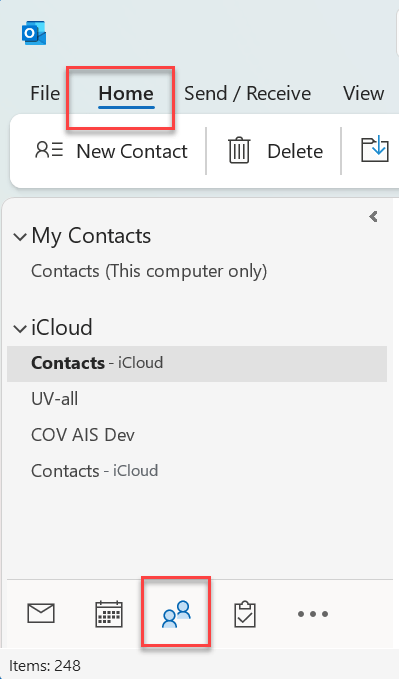

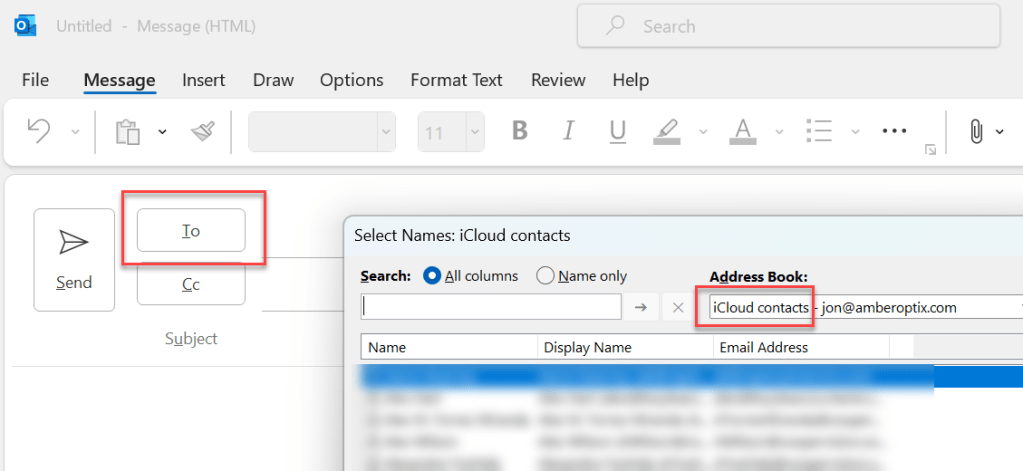

Meta meta note: this bit is written by me. This project and the blog post came out of the need for a simple visual report that didn’t require an internet connection and could be shared easily. I didn’t realise that single-file HTML pages could produce such rich and illustrative reports. Had I tried to write the CSS, the SVG, or indeed any of the HTML by hand, I would have spent days if not weeks ironing out the bugs. AI tools seem to be a perfect fit for this kind of problem, so I went from a domain-specific report to a general-purpose version, which resulted in this project. I hope you enjoy reading about it or trying it out, and maybe contributing fixes or new features! – Jon.

The Problem: Too Simple or Too Complex

I needed to generate HTML reports from my C# applications. Not dashboards, not interactive web apps—just clean, professional-looking reports that I could email as attachments, open in any browser, or print to PDF. Think weekly team summaries, test results, audit logs, that sort of thing.

When I surveyed the .NET ecosystem, I found myself in a frustrating middle ground:

- Too simple: String concatenation or basic templating gave me HTML, but styling was painful and charts were basically impossible without pulling in JavaScript libraries.

- Too complex: Full reporting frameworks like Crystal Reports, Telerik Reporting, or SSRS were overkill. I didn’t need a report designer, a server, or a 50MB dependency tree.

- PDF-focused: Libraries like iTextSharp or QuestPDF generate PDFs beautifully, but I wanted the flexibility of HTML—something I could view in a browser, send via email, and convert to PDF if needed.

What I really wanted was something in between: a simple API that could produce a self-contained HTML file with decent styling and basic charts, without requiring internet access, external CSS files, or JavaScript.

That’s when I discovered something I hadn’t fully appreciated: HTML files can contain everything they need inline. SVG graphics, embedded styles, even base64-encoded images. A single .html file can be completely self-contained and still look professional.

Enter Claude: AI Pair Programming

Rather than spending days building this from scratch, I decided to try something different: I would design the API and architecture, and let Claude AI write the implementation. This wasn’t about having AI “do it for me”—it was genuine pair programming, where I provided the vision, constraints, and design decisions, while Claude handled the mechanical work of writing classes, generating SVG paths, and handling HTML encoding edge cases.

The entire project took a few hours of elapsed time, spread across multiple sessions of:

- Me describing what I wanted

- Claude generating code

- Me testing the output

- Me providing feedback and refinements

- Repeat

The result is CDS.FluentHtmlReports, a zero-dependency .NET library that does exactly what I needed—nothing more, nothing less.

Key Design Decisions

1. Fluent API from Day One

The first and most important decision was the API design. I wanted a fluent interface where you could chain method calls to build up a report naturally:

This wasn’t just aesthetic—it enforced a crucial architectural constraint: reports are append-only. You can’t go back and modify earlier sections. You build the document linearly, top to bottom, which keeps the implementation simple and the mental model clear.

Claude and I discussed various approaches (builder pattern, document object model, template-based), but the fluent API won because it’s intuitive for C# developers and naturally prevents complexity creep.

2. Zero External Dependencies

The library targets .NET 8+ and has zero NuGet dependencies. Everything it needs is in the .NET Base Class Library. This means:

- No version conflicts

- No security vulnerabilities in third-party packages

- No breaking changes when you upgrade

- Tiny footprint

The trade-off? I couldn’t use fancy chart libraries or CSS frameworks. We had to generate everything ourselves—SVG paths, chart layouts, color schemes, responsive CSS. Claude handled the tedious math for pie chart arc calculations and bar chart scaling.
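The bar chart scaling mentioned above comes down to a linear mapping: each value is scaled against the largest value and then flipped, because SVG's y axis points downward. The following is a minimal sketch under the assumption of non-negative values. `BarChartSketch`, its method names, and the fill color are hypothetical and chosen for illustration; they are not the library's internal renderer.

```csharp
using System;
using System.Linq;
using System.Text;

// Demo: three bars drawn into a 300x120 coordinate space.
Console.WriteLine(BarChartSketch.Render(new double[] { 10, 25, 50 }, 300, 120));

// Hypothetical helper for illustration only; not the library's actual code.
static class BarChartSketch
{
    // Maps a value onto [0, plotHeight] relative to the largest value.
    public static double ScaleHeight(double value, double maxValue, double plotHeight) =>
        maxValue <= 0 ? 0 : value / maxValue * plotHeight;

    public static string Render(double[] values, double width, double height)
    {
        double max = values.Length == 0 ? 0 : values.Max();
        double slot = values.Length == 0 ? 0 : width / values.Length; // horizontal space per bar
        double barWidth = slot * 0.8;                                  // leave a 20% gap between bars

        var sb = new StringBuilder();
        // Invariant formatting keeps '.' as the decimal separator on every machine.
        sb.Append(FormattableString.Invariant(
            $"<svg viewBox=\"0 0 {width} {height}\" xmlns=\"http://www.w3.org/2000/svg\">"));

        for (int i = 0; i < values.Length; i++)
        {
            double h = ScaleHeight(values[i], max, height);
            double x = i * slot + (slot - barWidth) / 2; // center the bar in its slot
            double y = height - h;                       // y grows downward in SVG
            sb.Append(FormattableString.Invariant(
                $"<rect x=\"{x:0.##}\" y=\"{y:0.##}\" width=\"{barWidth:0.##}\" height=\"{h:0.##}\" fill=\"#4e79a7\"/>"));
        }

        return sb.Append("</svg>").ToString();
    }
}
```

Because the markup uses a `viewBox` rather than fixed pixel sizes, the browser can stretch the drawing to whatever width its container provides.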

3. Self-Contained Output

Every HTML file generated by the library contains:

- Inline CSS (no external stylesheets)

- Inline SVG charts (no image files, no canvas, no JavaScript)

- Base64-encoded images (if you add them)

- Print-friendly @media print rules

This was non-negotiable. I wanted to generate a file, email it, and know it would look identical on the recipient’s machine—no missing assets, no broken links, no “this page requires an internet connection.”
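To make the checklist above concrete, here is a small sketch of one HTML string that carries its own CSS, an inline SVG, a base64-encoded image, and a print rule. The `SelfContainedPage` class, the markup, and the styles are assumptions made for illustration; the library's real output will differ.

```csharp
using System;
using System.IO;
using System.Net;

// Stand-in image data: a tiny 1x1 GIF. In practice you would read a real file's bytes.
byte[] gif = Convert.FromBase64String("R0lGODlhAQABAIAAAAAAAP///yH5BAEAAAAALAAAAAABAAEAAAIBRAA7");

string html = SelfContainedPage.Build("Weekly Summary", gif, "image/gif");
File.WriteAllText("report.html", html); // one file, no sibling assets
Console.WriteLine($"Wrote {html.Length} characters");

// Hypothetical helper for illustration; not part of CDS.FluentHtmlReports.
static class SelfContainedPage
{
    public static string Build(string title, byte[] imageBytes, string mimeType)
    {
        string safeTitle = WebUtility.HtmlEncode(title);
        // A data: URI embeds the image bytes directly in the markup.
        string dataUri = $"data:{mimeType};base64,{Convert.ToBase64String(imageBytes)}";

        // Raw string literal: single braces are literal CSS, {{...}} interpolates.
        return $$"""
            <!DOCTYPE html>
            <html lang="en">
            <head>
            <meta charset="utf-8">
            <title>{{safeTitle}}</title>
            <style>
              body { font-family: sans-serif; margin: 2rem; }
              @media print { body { margin: 0; } .no-print { display: none; } }
            </style>
            </head>
            <body>
            <h1>{{safeTitle}}</h1>
            <img src="{{dataUri}}" alt="Embedded image">
            <svg viewBox="0 0 100 20" width="200" xmlns="http://www.w3.org/2000/svg">
              <rect width="60" height="20" fill="#59a14f"/>
            </svg>
            </body>
            </html>
            """;
    }
}
```

Nothing in the resulting file points at a stylesheet, script, or image on disk or on the network, which is what lets it travel as a single email attachment.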

How It Works: A Quick Tour

Here’s the example from the README that generates a complete weekly team report:

That’s it. One fluent chain produces a complete, styled HTML document with tables, charts, and formatting.

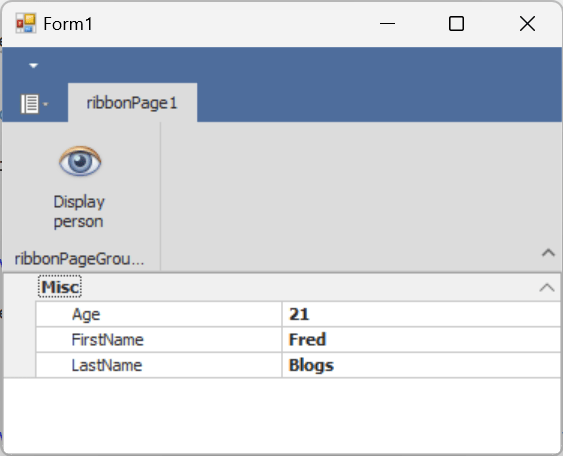

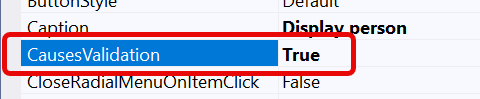

Tables with Reflection

The AddTable() method uses reflection to automatically generate columns from your object properties. You can pass in:

- Anonymous types (like the example above)

- POCOs (plain old C# objects)

- Records

- Any IEnumerable<T>
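The general technique behind reflection-driven tables can be sketched in a few lines: read the public properties of `T` once, use their names as headers, and read each property's value per row. The `TableSketch` class below is a hypothetical illustration of that idea, not the implementation behind AddTable(), whose details are not shown here.

```csharp
using System;
using System.Collections.Generic;
using System.Globalization;
using System.Linq;
using System.Net;
using System.Reflection;
using System.Text;

// Anonymous types work because T is inferred by the compiler at the call site.
var rows = new[]
{
    new { Name = "Alice", Tasks = 12 },
    new { Name = "Bob <Ops>", Tasks = 7 },
};
Console.WriteLine(TableSketch.ToHtmlTable(rows));

// Hypothetical helper for illustration; the library's AddTable() may work differently.
static class TableSketch
{
    public static string ToHtmlTable<T>(IEnumerable<T> items)
    {
        // Public instance properties become columns; indexers are skipped because
        // they cannot be read without arguments.
        PropertyInfo[] props = typeof(T)
            .GetProperties(BindingFlags.Public | BindingFlags.Instance)
            .Where(p => p.GetIndexParameters().Length == 0)
            .ToArray();

        var sb = new StringBuilder("<table><thead><tr>");
        foreach (var p in props)
            sb.Append("<th>").Append(WebUtility.HtmlEncode(p.Name)).Append("</th>");
        sb.Append("</tr></thead><tbody>");

        foreach (var item in items)
        {
            sb.Append("<tr>");
            foreach (var p in props)
            {
                // Encode every cell so characters like < and & cannot break the markup.
                string text = Convert.ToString(p.GetValue(item), CultureInfo.InvariantCulture) ?? "";
                sb.Append("<td>").Append(WebUtility.HtmlEncode(text)).Append("</td>");
            }
            sb.Append("</tr>");
        }

        return sb.Append("</tbody></table>").ToString();
    }
}
```

One caveat with this approach: reflection usually returns properties in declaration order, but the runtime does not guarantee it, so a production version might want an explicit column list.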

Want conditional formatting? Pass a callback:

Need summary rows? Specify which columns to aggregate:

Charts Without JavaScript

This was the part I didn’t know was possible: you can generate decent-looking charts using pure SVG, no JavaScript required.

The library supports:

- Vertical and horizontal bar charts

- Pie and donut charts

- Single and multi-series line charts

All rendered as inline SVG. Claude handled the trigonometry for pie slices and the scaling math for bar charts. Here’s a simple example:

The SVG scales to the container width and prints cleanly to PDF. No external libraries, no network requests.
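For readers curious about the trigonometry, a pie slice in SVG is a path that moves to the center, draws a line to the start of the arc, follows the arc, and closes. The sketch below shows one common way to compute those paths. `PieSketch` and its parameters are hypothetical names for illustration, not the library's ChartRenderer.

```csharp
using System;
using System.Collections.Generic;
using System.Linq;

// Demo: slices of 50%, 25% and 25% on a circle of radius 80 centered at (100, 100).
foreach (string d in PieSketch.SlicePaths(new double[] { 50, 25, 25 }, 100, 100, 80))
    Console.WriteLine($"<path d=\"{d}\"/>");

// Hypothetical helper for illustration only; not the library's actual code.
static class PieSketch
{
    public static List<string> SlicePaths(double[] values, double cx, double cy, double r)
    {
        var paths = new List<string>();
        double total = values.Sum();
        if (total <= 0) return paths;

        double start = -Math.PI / 2; // begin at 12 o'clock
        foreach (double v in values)
        {
            double sweep = v / total * 2 * Math.PI; // this slice's share of the circle
            double end = start + sweep;

            // Points on the circle; y grows downward in SVG, so increasing
            // angles move clockwise on screen.
            double x1 = cx + r * Math.Cos(start), y1 = cy + r * Math.Sin(start);
            double x2 = cx + r * Math.Cos(end), y2 = cy + r * Math.Sin(end);

            // The large-arc flag must be 1 when the slice spans more than 180 degrees.
            int largeArc = sweep > Math.PI ? 1 : 0;

            // Center -> arc start -> arc (sweep flag 1 = clockwise) -> close back to center.
            paths.Add(FormattableString.Invariant(
                $"M {cx:0.##} {cy:0.##} L {x1:0.##} {y1:0.##} A {r:0.##} {r:0.##} 0 {largeArc} 1 {x2:0.##} {y2:0.##} Z"));

            start = end;
        }
        return paths;
    }
}
```

A known edge case with this approach is a single slice covering 100% of the pie: the arc's start and end points coincide, so SVG draws nothing, and a `<circle>` element is the usual workaround.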

What Claude Actually Built

Let me be specific about what Claude wrote versus what I designed:

My Role (Design & Architecture)

- Defined the fluent API surface

- Specified the append-only constraint

- Decided on zero dependencies

- Chose which features to include (and which to skip)

- Tested output and provided feedback

- Made trade-off decisions

Claude’s Role (Implementation)

- Wrote the Generator, TextRenderer, TableRenderer, and ChartRenderer classes

- Implemented reflection-based table generation

- Calculated SVG paths for pie charts (trigonometry for arc segments)

- Scaled bar charts and line charts correctly

- Handled HTML encoding and edge cases

- Generated the embedded CSS stylesheet

- Wrote print-friendly media queries

- Created the demo/test suite

The collaboration worked because I knew what I wanted but didn’t want to spend time on the how. Claude is exceptionally good at “write me a method that generates an SVG pie chart given these data points” but needs guidance on “should this API support in-place editing or be append-only?”

Brutal Honesty: What This Is NOT

Let’s be clear about the limitations, because this library was built to solve a narrow problem:

❌ Not a General-Purpose Reporting System

This isn’t Crystal Reports or Telerik. There’s no visual designer, no drill-down, no parameterized queries, no data binding to databases. It’s a programmatic API for generating static HTML.

❌ Not for Complex Layouts

Want pixel-perfect positioning? Multi-column grids? Overlapping elements? Use a proper PDF library. This library does simple, linear, top-to-bottom document flow.

❌ Not for Interactive Charts

The charts are static SVG. No tooltips, no zoom, no click handlers. If you need interactivity, use a JavaScript charting library like Chart.js or D3.

❌ Not a Replacement for Excel

If your users need to manipulate the data, export to Excel or CSV instead. This generates read-only reports.

✅ Perfect For

- Automated email reports

- Audit logs and test results

- Weekly/monthly summaries

- Server-generated status pages

- Anything you’d previously done with Word mail merge but hated the process

It fits a specific niche: you need a simple, good-looking, self-contained HTML report that you can generate from code and share easily. That’s it.

The Development Process

Here’s what the workflow looked like:

Session 1: “Claude, I want to generate HTML reports with a fluent API. Here’s what I’m thinking…” We sketched out the Generator class and basic text methods.

Session 2: “The table rendering isn’t working right with anonymous types. Also, I want to add summary rows.” Claude fixed the reflection logic and added aggregation.

Session 3: “I need charts. Let’s start with vertical bars.” Claude generated the SVG rendering logic. I tested it, found scaling issues, gave feedback. Iterate.

Session 4: “Pie charts would be useful.” Claude calculated the arc paths. I discovered the colors weren’t distinct enough, so we refined the default palette.

Session 5-ish: Polish—adding alerts, badges, progress bars, collapsible sections, print styling.

Total elapsed time: a few hours spread over a couple of days. Most of that was me testing, thinking about edge cases, and deciding what features to add versus what to skip.

The key insight: AI is incredibly effective when you know what you want but don’t want to write the boilerplate yourself. I could have written this library manually, but it would have taken days, and I would have made mistakes in the SVG math that Claude got right the first time.

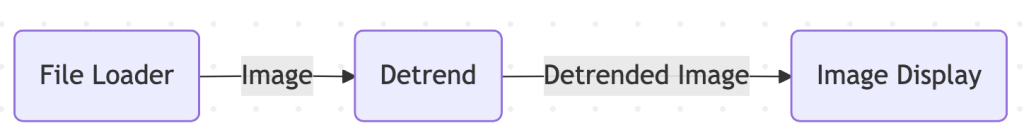

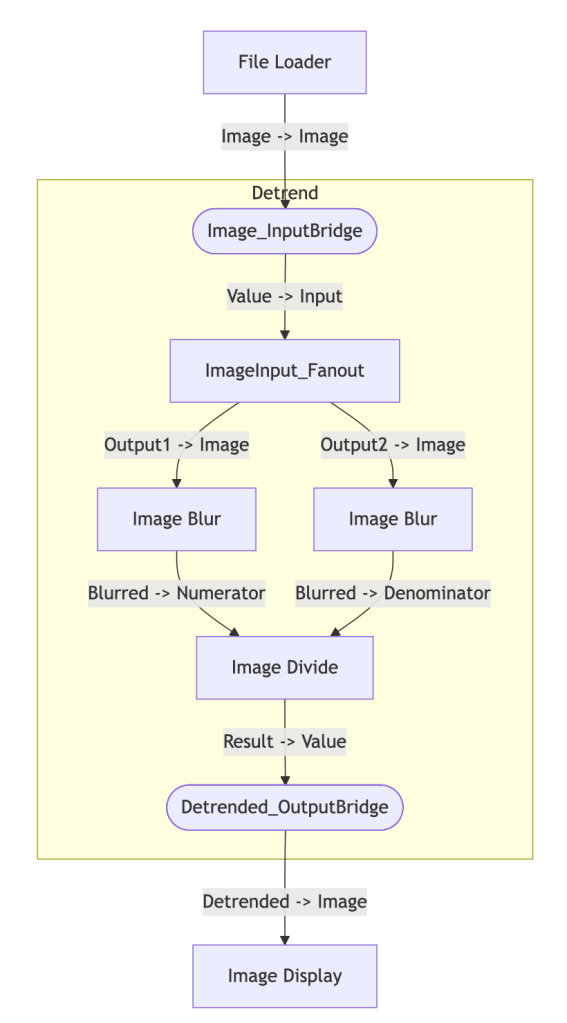

Architecture in Brief

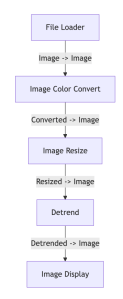

The library is intentionally simple:

All renderer classes are internal. The only public API is Generator, ReportOptions, and a handful of enums. This keeps the surface area small and makes the library easy to maintain.

Under the hood, everything writes to a StringBuilder. The append-only design means we never need to go back and modify earlier HTML—we just keep adding to the string until .Generate() closes the document and returns the final output.
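The append-only idea is small enough to show in miniature. In the sketch below, every method appends to one StringBuilder and returns the same instance so that calls can be chained, and Generate() writes the closing tags. `MiniReport` and its method names are hypothetical stand-ins for illustration; they are not the library's Generator class or its real API.

```csharp
using System;
using System.Net;
using System.Text;

string html = new MiniReport("Status")
    .AddHeading("Build results")
    .AddParagraph("All 42 tests passed.")
    .Generate();
Console.WriteLine(html);

// Hypothetical stand-in for illustration; not the library's Generator class.
sealed class MiniReport
{
    private readonly StringBuilder _html = new();
    private bool _closed;

    public MiniReport(string title)
    {
        _html.Append("<!DOCTYPE html><html><head><meta charset=\"utf-8\"><title>")
             .Append(WebUtility.HtmlEncode(title))
             .Append("</title></head><body>");
    }

    // Each Add* method appends markup and returns this, which is what enables chaining.
    public MiniReport AddHeading(string text) => Append("h2", text);
    public MiniReport AddParagraph(string text) => Append("p", text);

    // Closes the document once and returns the accumulated HTML.
    public string Generate()
    {
        if (!_closed)
        {
            _html.Append("</body></html>");
            _closed = true;
        }
        return _html.ToString();
    }

    private MiniReport Append(string tag, string text)
    {
        // Append-only: nothing can be added after the document has been closed.
        if (_closed) throw new InvalidOperationException("Report already generated.");
        _html.Append('<').Append(tag).Append('>')
             .Append(WebUtility.HtmlEncode(text))
             .Append("</").Append(tag).Append('>');
        return this;
    }
}
```

Because there is no method that reaches back into earlier output, the builder never needs an intermediate document model; the StringBuilder is the only state.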

Try It Yourself

The library is available on NuGet:

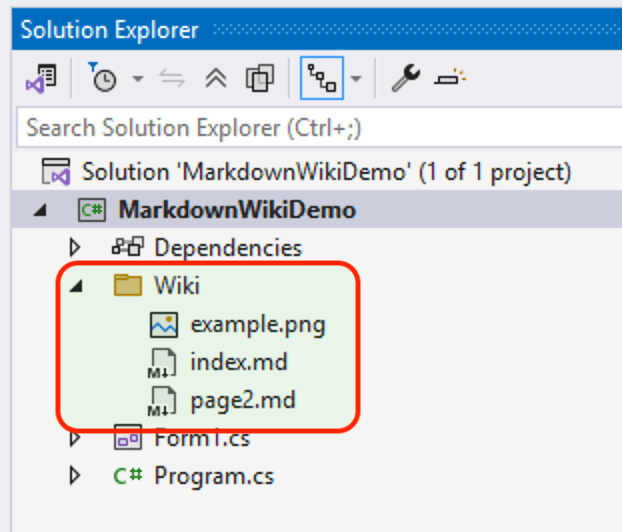

The source code and demo suite are on GitHub: https://github.com/nooogle/CDS.FluentHtmlReports

The ConsoleTest project generates a bunch of sample reports that demonstrate every feature. Clone the repo, run dotnet run in the ConsoleTest directory, and it’ll create HTML files in your Downloads folder.

Lessons Learned

1. Constraints Drive Simplicity

By deciding early that reports would be append-only and zero-dependency, we avoided a lot of complexity. No undo/redo, no object models, no dependency injection—just a straightforward builder that writes HTML.

2. AI Excels at Well-Defined Problems

Claude was phenomenal at “generate SVG for a pie chart” or “write a method to create an HTML table from reflection” because those problems have clear inputs and outputs. It struggled more with ambiguous questions like “should we support templates?” where the answer depended on product vision.

3. Good Defaults Matter

We spent time tuning the default color palettes, font sizes, and spacing so that reports look decent out of the box. Users can override these via CSS or the options API, but most won’t need to.

4. Self-Contained HTML Is Underrated

I genuinely didn’t know you could make such nice-looking documents with zero external dependencies. No CDN links, no font downloads, no JavaScript—just HTML and inline SVG. It opens instantly, emails cleanly, and prints perfectly.

When to Use CDS.FluentHtmlReports

Use it when:

- You need simple, automated reports from C# code

- You want to email reports as attachments

- You need print-friendly output (Ctrl+P → PDF)

- You don’t want JavaScript or external dependencies

- Your layout is linear/top-to-bottom

- You’re okay with static (non-interactive) charts

Don’t use it when:

- You need pixel-perfect layouts or complex positioning

- You need interactive charts with tooltips and zoom

- You want users to edit the data (use Excel/CSV instead)

- You need a visual report designer for non-developers

- You require advanced features like subreports or drill-down

Conclusion

Building CDS.FluentHtmlReports with Claude was an eye-opening experience. I got to focus on the design (what features to include, how the API should feel, what trade-offs to make) while Claude handled the mechanical work of turning those decisions into working code.

The result is a library that does exactly what I needed: it generates clean, professional-looking HTML reports with a simple fluent API, zero dependencies, and self-contained output. It’s not trying to be everything to everyone; it solves a narrow problem well.

If you’ve ever found yourself stuck between “too simple” and “too complex” when generating reports from .NET, give it a try. And if you’ve been curious about AI pair programming, this project is a great example of how it can work: you bring the vision and judgment, AI brings the speed and precision.

The code is MIT licensed and available on GitHub. Pull requests welcome.

Jon is a C# developer working on vision systems and industrial applications. He writes about software development, image processing, and occasionally medieval Italian commerce.